Twitter is a great tool for keeping tabs on your friends and on things that interest you. It is also a great tool for being a tool.

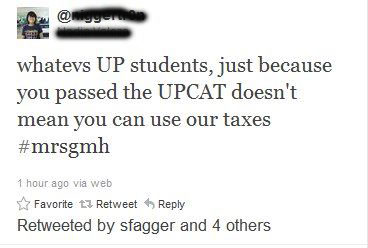

Like so:

Blacked out her name because she's clearly a minor whose identity should be protected. At least we hope she is a minor.

Now, the UP College Admission Test (UPCAT) is sort of a big deal for many high school seniors, and the pressure of getting in is too much for some people. Still, that is a weak excuse for indulging in some of the most ill-informed sour graping in the history of talking shit.

Actually, @N___________, Republic Act 9500, or the University of the Philippines Charter of 2008, says passing the UPCAT does mean UP students can use your taxes. So do Republic Act 10147 and probably every General Appropriations Act passed since UP became a state school. Shit, government subsidy to UP probably even predates the Republic. More than enough time for you to understand that state schools are paid for, in part, by taxes.

Unfortunately for you, @N___________, “just because” people pass the UP Law Aptitude Exam or get into the UP College of Medicine also means they get to use your taxes.

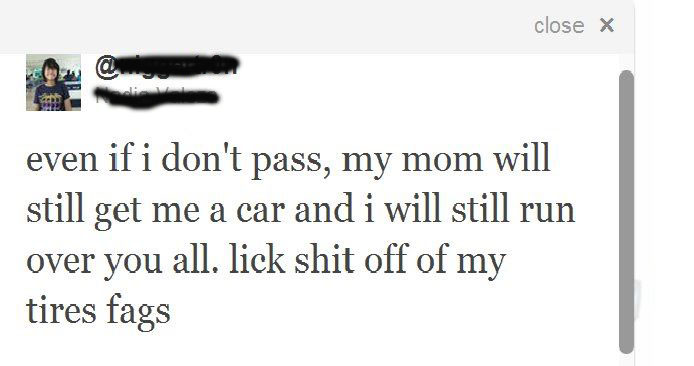

Well, at least there’s that. Try not to drive through floods while doing that, though. You’re obviously even less informed than that other guy. And at least he got into UP.

—

Thx for the tip, Indolent friend Mrs. Dennis, Ang Taong Lobo!
